For effective cloud management in today’s digital world, organizations demand speed, security, and efficiency. However, many still rely on a manual configuration approach known as ClickOps, using the Azure portal for deployments. While easy to start with, ClickOps can result in slower deployment times, misconfigurations, and limited scalability. The solution is an Infrastructure as Code (IaC) and DevSecOps mindset.

This blog covers:

- The six key challenges of ClickOps

- How IaC and DevSecOps solve these challenges

- Practical steps to secure and scale your Azure environment

The challenges of ClickOps (and their DevSecOps solutions)

According to the Global DevSecOps report from July 2024, only 56% of organizations have implemented DevSecOps practices. This leaves many relying on ClickOps, manually deploying infrastructure via the Azure portal GUI.

ClickOps offers a low entry barrier, making it tempting for teams to quickly set up infrastructure without any governance framework. While this approach is easy to get started with, it will create growing technical debt and operational challenges over time.

Below, we explore the six biggest challenges of ClickOps and how IaC and DevSecOps can overcome them.

1) Technical debt with hidden costs

ClickOps may seem like an easy way to deploy resources in Azure. After all, it is just a few clicks in the portal, right? But as organizations scale, this approach becomes a costly bottleneck.

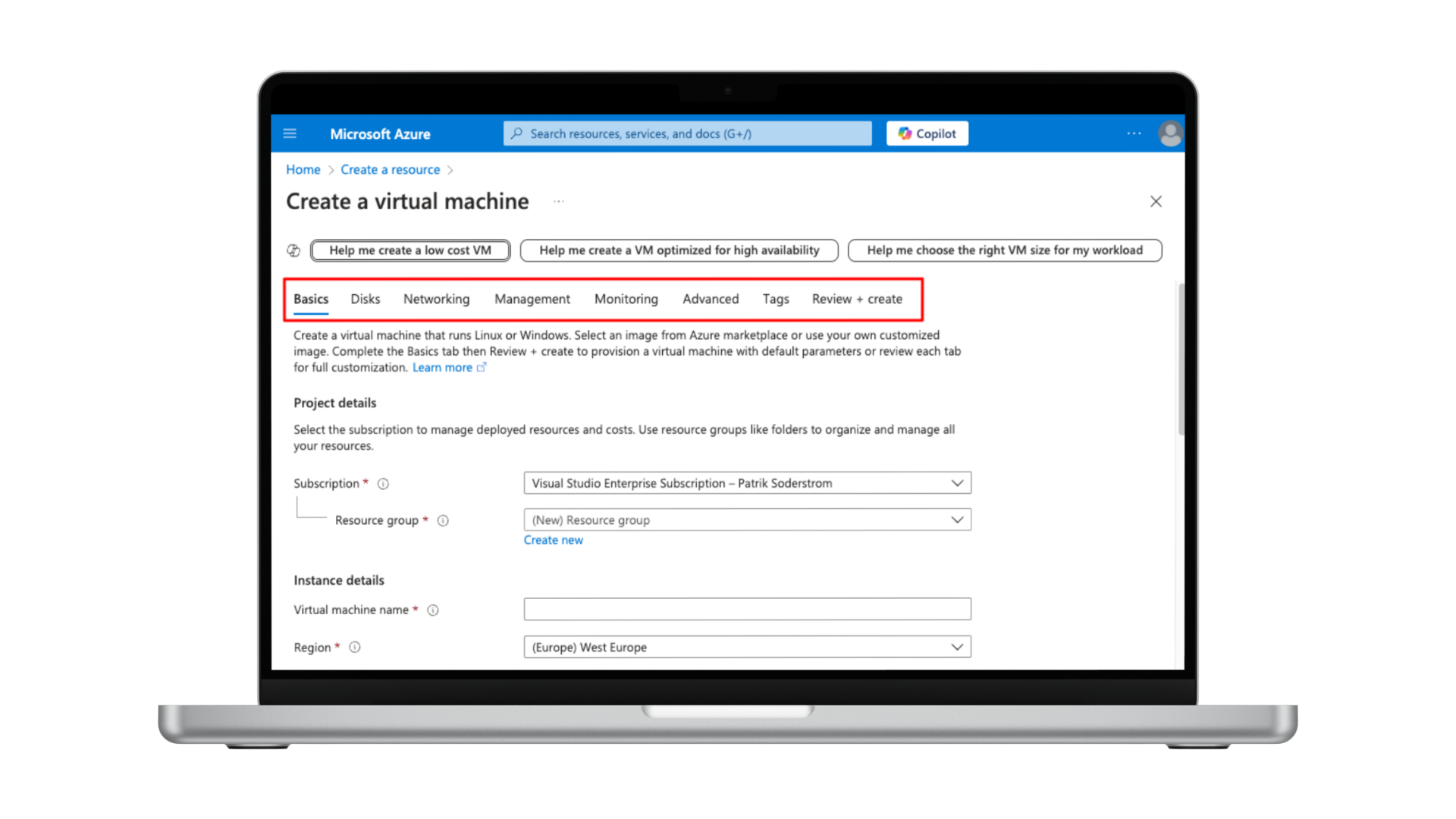

For example: Deploying a virtual machine in the Azure portal requires navigating eight tabs, each with important information that has to be filled in correctly before the resource can be deployed. While manageable for a single virtual machine, it becomes increasingly difficult to ensure consistent and error-free entries for larger deployments.

Over time, the limitations of ClickOps become painfully clear. Routine tasks, such as adding disks with specific configurations to multiple virtual machines, are time-consuming and repetitive.

The solution: Automating deployments with IaC reduces technical debt

With DevSecOps and Infrastructure as Code (IaC), deployments are automated and follow the defined security policies. Changing or updating resources such as virtual machines is a matter of updating parameters and starting the deployment pipeline.
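To make the idea of "updating parameters" concrete, here is a minimal Python sketch. It is not a real Bicep or Terraform template: the `VmSpec` and `DataDisk` classes, the `render` function, and the VM names are hypothetical and only illustrate how one parameter change can roll out to a whole fleet instead of being clicked through per machine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataDisk:
    """Hypothetical data disk parameters (illustrative names only)."""
    size_gb: int
    sku: str = "Premium_LRS"


@dataclass(frozen=True)
class VmSpec:
    """Hypothetical VM parameters a pipeline might read from a parameter file."""
    name: str
    size: str
    data_disks: tuple = ()


def add_disk_to_all(specs, disk):
    """Return new specs in which every VM has one extra data disk."""
    return [VmSpec(s.name, s.size, s.data_disks + (disk,)) for s in specs]


def render(specs):
    """Expand specs into plain dicts that a deployment step could consume."""
    return [
        {
            "name": s.name,
            "size": s.size,
            "dataDisks": [
                {"lun": lun, "sizeGB": d.size_gb, "sku": d.sku}
                for lun, d in enumerate(s.data_disks)
            ],
        }
        for s in specs
    ]


# Three identical VMs, then one parameter change applied to all of them.
fleet = [VmSpec(f"vm-app-{i:02d}", "Standard_D2s_v5") for i in range(1, 4)]
fleet = add_disk_to_all(fleet, DataDisk(size_gb=128))
resources = render(fleet)
```

In a real setup the same role is played by a parameter file and a template; the point of the sketch is only that the change is made once, in data, and applied consistently.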

2) Slower time-to-market with repetitive tasks

ClickOps involves a lot of manual, repetitive work and increases the risk of human error. Setting up multiple resources with a similar configuration slows time-to-market, especially in cloud environments where speed is crucial.

The solution: Streamlined deployment with reusable IaC templates

IaC provides reusable libraries and catalogs of pre-configured resources. Teams can deploy environments faster and use more cost-efficient setups of cloud resources.

3) Managing multiple environments

ClickOps makes it difficult to maintain consistency across different environments, such as test and production. Manual setup often requires manual checks to ensure that environments are identical, which is not only inefficient but also prone to mistakes.

The solution: Consistency through IaC automation

Infrastructure as Code enables teams to use a test environment as a blueprint for other types of environments such as production. The blueprint avoids manual comparison and ensures that both environments are identical.

The same applies to infrastructure changes. A change can be prepared, tested, and validated in a test environment, reducing deployment stress and errors in the production environment.
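The blueprint idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how any particular IaC tool works internally: `BLUEPRINT`, `OVERRIDES`, `build_environment`, and `differences` are hypothetical names, and the settings are made-up examples. Every environment is derived from one shared definition, and only intentional differences are listed per environment.

```python
import copy

# Hypothetical blueprint shared by every environment.
BLUEPRINT = {
    "location": "westeurope",
    "vm_size": "Standard_D2s_v5",
    "vm_count": 2,
    "tags": {"managed_by": "iac"},
}

# Only the intentional differences are declared per environment.
OVERRIDES = {
    "test": {"vm_count": 1},
    "production": {"vm_count": 4, "vm_size": "Standard_D4s_v5"},
}


def build_environment(name):
    """Derive an environment from the blueprint plus its declared overrides."""
    env = copy.deepcopy(BLUEPRINT)  # never mutate the shared blueprint
    env.update(OVERRIDES.get(name, {}))
    env["tags"]["environment"] = name
    return env


def differences(a, b):
    """Return the top-level keys whose values differ between two environments."""
    return sorted(k for k in a.keys() | b.keys() if a.get(k) != b.get(k))


test_env = build_environment("test")
prod_env = build_environment("production")
changed_keys = differences(test_env, prod_env)
```

Because both environments come from the same source, `changed_keys` contains only the settings that were deliberately overridden (plus the environment tag), which replaces the manual side-by-side comparison.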

4) Lack of collaboration and version control

In ClickOps, changes to infrastructure often lack version control and transparency. It’s hard for teams to coordinate effectively and track who made which changes.

The solution: IaC as the single source of truth

Even in small teams, IaC acts as the single source of truth. It describes the actual configuration and setup of the cloud environment. Every change is tracked: who made it, what was changed, and when it was applied. Working with pull requests in Git requires team members to request changes before they are applied to the actual environment, creating an extra layer of validation.

5) Disaster recovery limitations

If an environment is tampered with, or is partly or completely corrupted through human error, ClickOps offers no realistic way to rebuild it. Can you imagine having to set up hundreds of Azure resources manually in another region? 🥲

The solution: Building resilience with DevSecOps

IaC and DevSecOps enable you to recreate the complete environment from source code. This approach results in a shorter Recovery Time Objective (RTO) and Recovery Point Objective (RPO) during disaster recovery.

6) Security and compliance risks

It is true that resources configured through ClickOps are still governed by your established framework of Azure policies. However, these checks occur only during or after resource creation.

The solution: Ensuring compliance before deployment

Having the configuration of your cloud infrastructure in code allows compliance and security scans to run directly on the source. Every infrastructure change is audited, and any non-compliance is flagged before the actual deployment. Resolving all non-compliance before deployment keeps the security posture intact.

Enforcing an approach where only the CI/CD pipeline has permission to change the infrastructure creates an additional layer of defense.
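The pre-deployment scan described in this section could look roughly like the Python sketch below. It is a simplified illustration, not a real scanner or the Azure Policy engine: the resource dictionaries, the rule functions, and `scan` are hypothetical, and a real pipeline would run a dedicated tool against the actual templates or plan output.

```python
# Hypothetical resource definitions, e.g. parsed from a rendered template.
resources = [
    {"type": "storage_account", "name": "stlogs001",
     "https_only": True, "public_access": False, "tags": {"owner": "platform"}},
    {"type": "storage_account", "name": "stpublic01",
     "https_only": False, "public_access": True},
    {"type": "virtual_machine", "name": "vm-app-01", "tags": {"owner": "platform"}},
]


def require_https(resource):
    """Storage accounts must only accept HTTPS traffic."""
    if resource["type"] == "storage_account" and not resource.get("https_only", False):
        return f"{resource['name']}: HTTPS-only traffic must be enabled"
    return None


def forbid_public_access(resource):
    """Storage accounts must not allow public access."""
    if resource["type"] == "storage_account" and resource.get("public_access", False):
        return f"{resource['name']}: public access must be disabled"
    return None


def require_owner_tag(resource):
    """Every resource needs an 'owner' tag."""
    if "owner" not in resource.get("tags", {}):
        return f"{resource['name']}: missing 'owner' tag"
    return None


RULES = [require_https, forbid_public_access, require_owner_tag]


def scan(definitions, rules=RULES):
    """Run every rule against every definition and collect the findings."""
    findings = []
    for resource in definitions:
        for rule in rules:
            message = rule(resource)
            if message:
                findings.append(message)
    return findings


findings = scan(resources)
deployment_allowed = not findings  # a pipeline would stop here when False
```

A pipeline step built on this idea would fail the run whenever `findings` is non-empty, so the non-compliant storage account never reaches Azure.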

ClickOps out, DevSecOps in

To overcome these challenges, organizations should implement Infrastructure as Code (IaC) and DevSecOps. Together, they automate entire deployments while ensuring security best practices are followed.

Choosing the right IaC language

When selecting an IaC language, there are two strong options on the table:

- Bicep: Azure’s native language, seamlessly integrated with Azure and directly backed by Microsoft. New Azure services are immediately supported in Bicep.

- Terraform: A cloud-agnostic option, widely supported across environments. A popular choice for organizations with multi-cloud needs.

While Terraform support for new Azure services arrives quickly, it is not always available on the first day of release. The general recommendation is to choose Terraform if you are automating deployments for virtualization environments, multi-cloud scenarios, or on-premises workloads. Microsoft provides an excellent comparison of the two languages.

💡 Tip: Tools like Aztfexport can export your current Azure environment into Terraform code. This code can then be reviewed, stored in a repository, and used to provision resources consistently. The environment can then be locked to prevent portal-based changes, so that all modifications go through IaC and configuration drift is avoided.

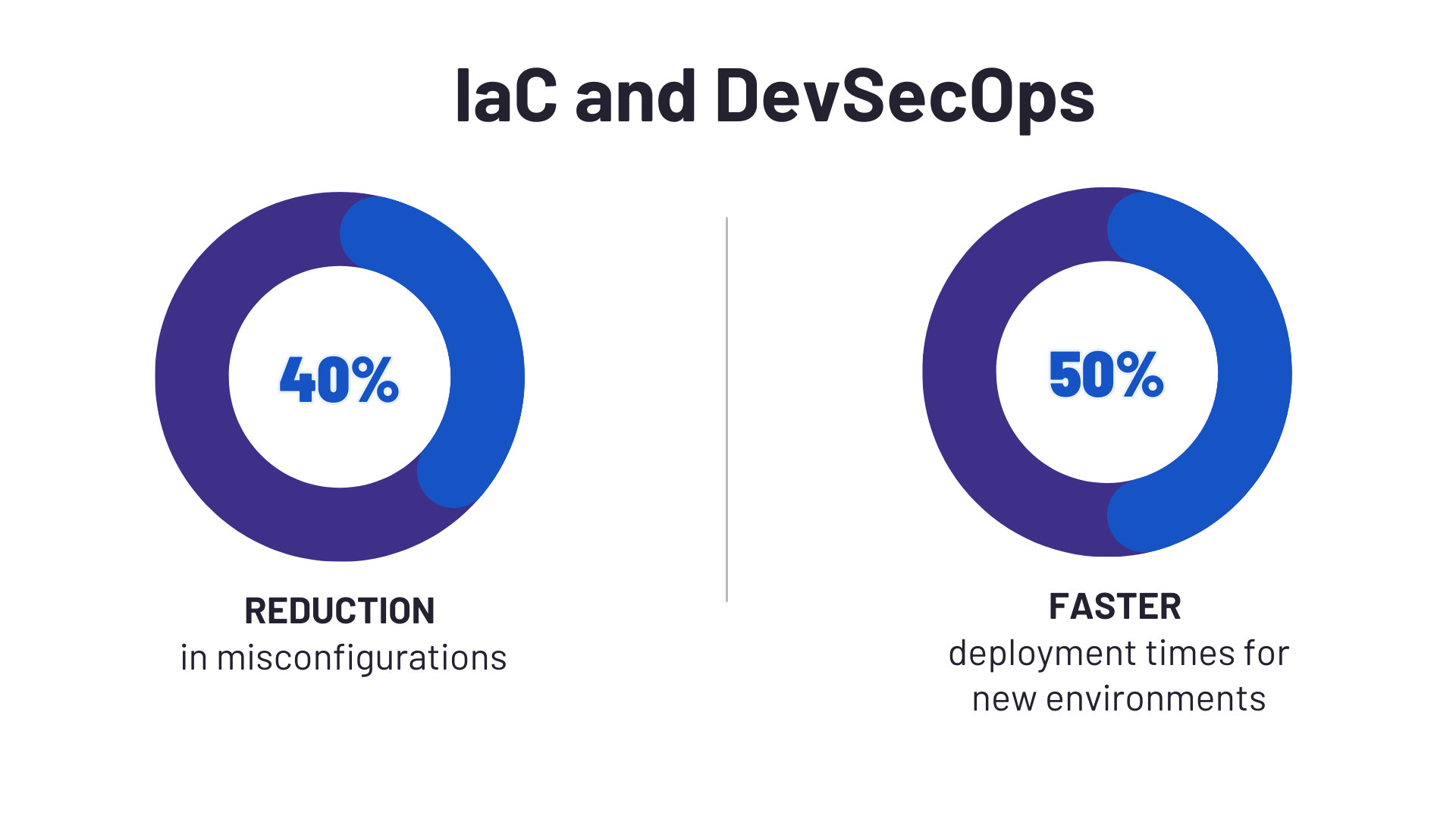

IaC and DevSecOps approach: success story at ACA Group

For one of our clients, we reverse-engineered their existing setup into Terraform code, creating a reusable template. This IaC and DevSecOps approach reduced misconfigurations by 40% and cut deployment times for new environments by 50%.

At the ACA Group, every Azure environment we manage follows IaC and DevSecOps principles. Here's how we approach new and existing environments:

- Greenfield approach (starting from scratch): Establishing a new landing zone from scratch is straightforward. We utilize governance frameworks, templates, and pipelines fully aligned with the Microsoft Cloud Adoption Framework to ensure compliance and efficiency.

- Brownfield approach (optimizing existing environments): Existing setups require a more customized strategy. We use tools like Aztfexport, integrated into our existing workflows, to reverse-engineer the environment into IaC templates and ensure a seamless transition.

Preparing for the DevSecOps transformation

Transitioning to DevSecOps involves more than technical change: it is a shift in mindset. Organizations have to evolve their internal policies and processes to support IaC practices and move to an efficient and secure cloud environment.

At the ACA Group, we specialize in guiding organizations through this transformation. Whether you’re starting fresh or optimizing an existing Azure environment, we’re happy to help.

