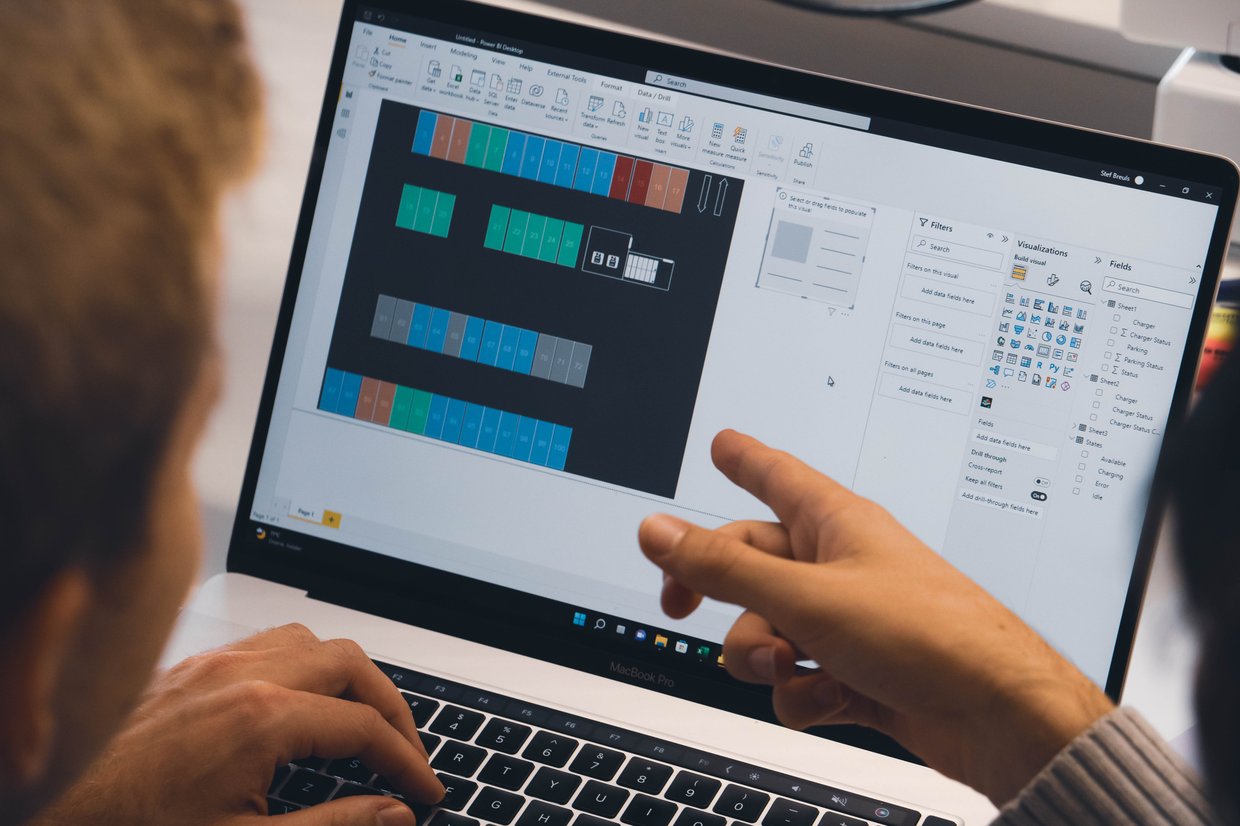

There is constant talk these days about the importance of a 'real-time enterprise' that can immediately notice and respond to any event or request. So what does it mean to be 'real-time'? Real-time technology is crucial for organizations because real
