In Belgium, there’s a saying: “Every Belgian is born with a brick in their stomach.” It reflects the nation's deep-rooted drive to build homes that last. This principle doesn’t just apply to houses; it’s equally true for your cloud infrastructure.

Without a strong foundation, your Azure workloads risk becoming unstable, inefficient, or even vulnerable. That’s where Microsoft’s Well-Architected Framework (WAF) comes in. Read on to discover how this framework’s five pillars can turn your cloud workload into a structure built to last.

What is the Well-Architected Framework (WAF)?

The Well-Architected Framework helps you build secure, high-performing, resilient, and efficient infrastructure and applications on Azure. By following its guidelines, you ensure that your cloud infrastructure follows the recommendations and standards set by Microsoft.

This framework consists of five pillars: Reliability, Security, Cost Optimization, Operational Excellence, and Performance Efficiency.

(Image source: https://learn.microsoft.com/en-us/azure/well-architected/)

Each of these pillars offers valuable guidance and best practices, but they also involve tradeoffs. Every decision, whether financial or technical, comes with its own set of considerations. For example, while securing workloads is important, it comes with added costs and potential technical implications.

Let’s take a closer look at each of the five pillars of the Well-Architected Framework.

Reliability

Failures are inevitable, no matter how much we wish otherwise. That’s why designing systems with failure in mind is crucial. A workload must survive failures while continuing to deliver services without disruption.

This requires more than just designing your workload for failure. It also means setting reliable recovery targets and conducting sufficient testing.

First, identify your reliability targets. Making everything geo-redundant is great, but it comes at a cost for the business. Once the targets are set, the next step is to map the required redundancy level to the right Azure technology. Considering only the compute parts of an application is not enough; you also need to take the supporting components into account, such as network, data, and other infrastructure tiers.
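To make the point about supporting components concrete, here is a minimal Python sketch of how the availability of several tiers combines. The availability figures and the 99.95% target are illustrative assumptions, not actual Azure SLAs, and the functions assume that tiers fail independently.

```python
from math import prod
from typing import Mapping

# Illustrative availability per tier (fraction of uptime).
# These are assumed numbers for the example, NOT actual Azure SLAs.
TIER_AVAILABILITY = {
    "network": 0.9999,
    "compute": 0.9995,
    "data": 0.9999,
}


def composite_availability(tiers: Mapping[str, float]) -> float:
    """Availability of a workload that needs every tier to be up at once."""
    return prod(tiers.values())


def with_redundancy(availability: float, copies: int) -> float:
    """Availability of N independent copies where one healthy copy is enough."""
    return 1 - (1 - availability) ** copies


def meets_target(tiers: Mapping[str, float], target: float) -> bool:
    """Check the composite availability against a reliability target."""
    return composite_availability(tiers) >= target


if __name__ == "__main__":
    target = 0.9995  # hypothetical business target
    print(f"composite: {composite_availability(TIER_AVAILABILITY):.5f}")
    print(f"meets {target:.2%} target: {meets_target(TIER_AVAILABILITY, target)}")

    # Adding a second, independent copy of the compute tier.
    redundant = dict(TIER_AVAILABILITY)
    redundant["compute"] = with_redundancy(TIER_AVAILABILITY["compute"], copies=2)
    print(f"meets target with redundant compute: {meets_target(redundant, target)}")
```

With these example numbers, the workload as a whole falls short of the target even though the compute tier alone meets it, which is why the network and data tiers belong in the calculation.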

Deep dive into the Microsoft checklist: https://learn.microsoft.com/en-us/azure/well-architected/reliability/checklist

Security

All workloads should be built around a Zero Trust approach. A secure workload is resilient to attacks while ensuring confidentiality, integrity, and availability. Just like availability, confidentiality and integrity come with multiple options, each with its own impact on cost and complexity. For instance, how important is encryption in use? Answering this question can significantly shape your solution.

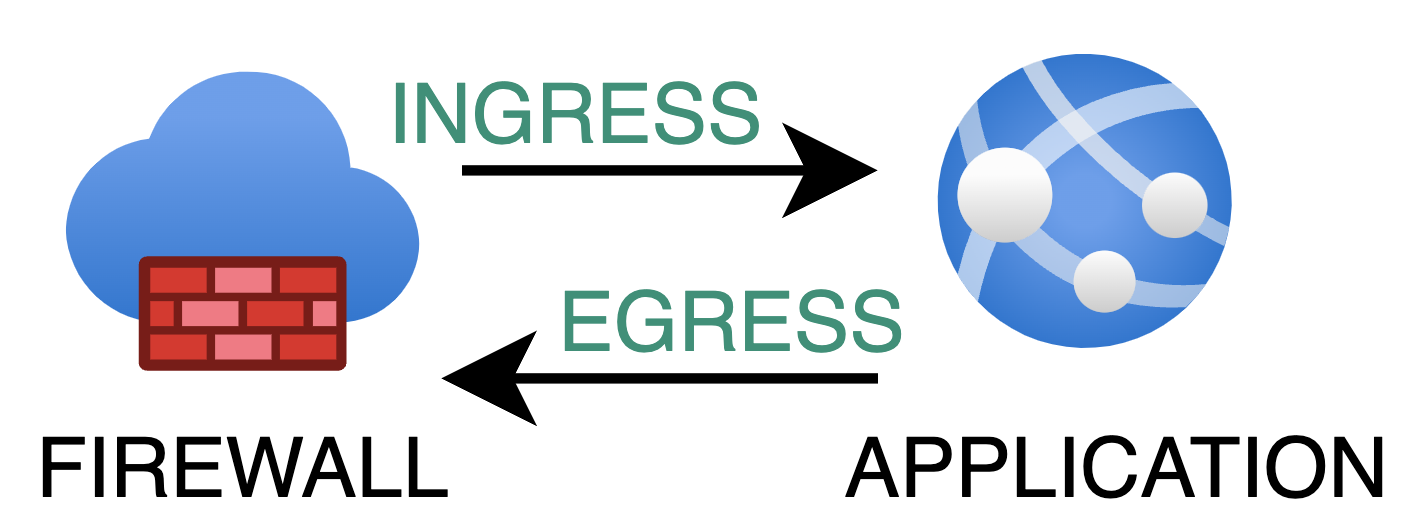

Security isn’t a one-layer fix; it must be applied at every level. While it’s standard practice to route all incoming (ingress) traffic through a firewall, the same applies to outgoing (egress) traffic: all of it should be explicitly approved and routed through a firewall.

There are additional ways to secure communication within your Azure environment. Private Endpoints secure the communication between application components and offer better protection than Service Endpoints, which are cheaper but carry the risk of data exfiltration.

Don’t overlook Azure DDoS Protection either. DDoS attacks can target any publicly accessible endpoint, potentially causing downtime and forcing your environment to scale up and out. This not only slows down your workload but also leaves you with a large consumption bill.

The comprehensive checklist from Microsoft is available here: https://learn.microsoft.com/en-us/azure/well-architected/security/checklist

Cost Optimization

Every architecture design and workload is driven by business goals. This pillar is not about cutting costs to the minimum. It’s about finding the most cost-effective solution.

This pillar aligns closely with the FinOps framework, which we have covered here. A good first step is to create a cost model to estimate the initial cost, run rates, and ongoing costs.

This model provides a baseline against which you can compare the actual cost of the environment on a daily basis. The work doesn’t stop there: it’s essential to set up anomaly alerts that notify you when the expected baseline is exceeded.
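The idea of comparing daily spend against a baseline can be sketched in a few lines of Python. This is only an illustration of the logic: the `DailyCost` type, the 20% tolerance, and the sample amounts are assumptions made for this example. In practice you would likely rely on a managed feature such as budgets or anomaly alerts in Azure Cost Management rather than custom code.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DailyCost:
    day: str       # ISO date, e.g. "2025-01-02"
    actual: float  # amount billed that day, in your billing currency


def find_anomalies(
    costs: List[DailyCost],
    baseline_per_day: float,
    tolerance: float = 0.20,  # assumed: alert when more than 20% above baseline
) -> List[DailyCost]:
    """Return the days whose actual cost exceeds the baseline plus tolerance."""
    limit = baseline_per_day * (1 + tolerance)
    return [cost for cost in costs if cost.actual > limit]


if __name__ == "__main__":
    # Hypothetical figures; the baseline would come from your cost model.
    observed = [
        DailyCost("2025-01-01", 100.0),
        DailyCost("2025-01-02", 130.0),
        DailyCost("2025-01-03", 119.0),
    ]
    for anomaly in find_anomalies(observed, baseline_per_day=100.0):
        print(f"{anomaly.day}: {anomaly.actual:.2f} exceeds the expected baseline")
```

The tolerance is the part worth tuning: too tight and you get alert fatigue, too loose and a runaway resource goes unnoticed for days.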

It’s also important to optimize the scaling of your application. Can your resources scale both out and up? Which approach is the most cost-effective and delivers the best results? Certain applications hit a performance plateau when scaling up, which means adding CPU and memory to an existing instance. Perhaps the application can only handle a minor extra load once you reach 256 GB of memory. In that case, it may be more beneficial to scale out by adding more instances rather than scaling up with additional compute power.
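One way to compare scaling up with scaling out is to express both as a cost per unit of work. The sketch below does this in Python. The prices and throughput figures are made up for illustration; real numbers would come from your own load tests and the Azure pricing for the SKUs you are considering.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ScalingOption:
    name: str
    hourly_cost: float          # hypothetical price per hour for the whole setup
    requests_per_second: float  # throughput measured in a load test


def cost_per_million_requests(option: ScalingOption) -> float:
    """Cost of serving one million requests at the measured throughput."""
    requests_per_hour = option.requests_per_second * 3600
    return option.hourly_cost / requests_per_hour * 1_000_000


def cheapest(options: List[ScalingOption], required_rps: float) -> Optional[ScalingOption]:
    """Pick the cheapest option per request that still meets the required load."""
    viable = [o for o in options if o.requests_per_second >= required_rps]
    if not viable:
        return None
    return min(viable, key=cost_per_million_requests)


if __name__ == "__main__":
    # Illustrative numbers: the large instance has hit its performance plateau.
    options = [
        ScalingOption("scale up: 1 large instance", hourly_cost=4.0, requests_per_second=1100),
        ScalingOption("scale out: 4 small instances", hourly_cost=2.0, requests_per_second=2000),
    ]
    best = cheapest(options, required_rps=1000)
    if best is not None:
        print(f"{best.name}: {cost_per_million_requests(best):.2f} per million requests")
```

The comparison is only as good as the throughput measurements behind it, so it pays to load test each configuration rather than extrapolate from instance sizes.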

The comprehensive checklist from Microsoft is available here: https://learn.microsoft.com/en-us/azure/well-architected/cost-optimization/checklist

Operational Excellence

The core of Operational Excellence is a set of DevOps practices, which define the operating procedures for development, observability, and release management. One key goal in this pillar is to reduce the chance of human error.

It’s important to approach implementations and workloads with a long-term vision. Take the distinction between ClickOps and DevOps as an example. While it's tempting to quickly set up resources using the Azure Portal (ClickOps), this builds up technical debt. Adopting a DevOps approach instead helps you build a more sustainable, efficient, and automated workflow for the future.

Read our in-depth blog post about moving from ClickOps to DevOps for more details.

Always use a standardized Infrastructure as Code (IaC) approach. Formalize the way you handle operational tasks with clear documentation, checklists, and automation. This ties into what we covered under Reliability, but focuses on processes. Make sure you have a strategy to address unexpected rollout issues and recover swiftly.

The comprehensive checklist from Microsoft is available here: https://learn.microsoft.com/en-us/azure/well-architected/operational-excellence/checklist

Performance Efficiency

This pillar is all about your workload’s ability to adapt to changing demand. Your application must be able to handle increased load without compromising the user experience.

Think about the thresholds you use to scale your application. How quickly can Azure resources scale up or out? Consider traffic patterns, as there may be high load during certain hours, such as in the morning. Perhaps you can schedule scaling in advance to ensure resources are available when needed.
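The combination of threshold-based and scheduled scaling can be sketched as a small decision function. The Python below is a simplified model, not how any Azure service implements autoscaling: the CPU thresholds, instance limits, and the morning window are all assumed values chosen for the example.

```python
from datetime import time

# All values below are assumptions for illustration.
MIN_INSTANCES = 2
MAX_INSTANCES = 10
SCALE_OUT_CPU = 70.0  # add an instance above this average CPU percentage
SCALE_IN_CPU = 30.0   # remove an instance below this average CPU percentage

# (start, end, minimum instances) windows that are provisioned ahead of known peaks.
SCHEDULED_FLOORS = [
    (time(7, 30), time(10, 0), 6),  # hypothetical morning peak
]


def scheduled_floor(now: time) -> int:
    """Minimum instance count that applies at the given time of day."""
    for start, end, floor in SCHEDULED_FLOORS:
        if start <= now < end:
            return floor
    return MIN_INSTANCES


def desired_instances(current: int, avg_cpu: float, now: time) -> int:
    """Combine reactive (threshold) and proactive (scheduled) scaling."""
    target = current
    if avg_cpu > SCALE_OUT_CPU:
        target += 1
    elif avg_cpu < SCALE_IN_CPU:
        target -= 1
    # Never drop below the scheduled floor or exceed the hard maximum.
    return max(scheduled_floor(now), min(MAX_INSTANCES, target))


if __name__ == "__main__":
    print(desired_instances(current=2, avg_cpu=20.0, now=time(8, 0)))
    print(desired_instances(current=4, avg_cpu=85.0, now=time(14, 0)))
```

The scheduled floor covers the time it takes for new instances to become ready, while the thresholds handle load that the schedule did not anticipate.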

The overall recommendation is to make performance a priority at every stage of the design. As you move through each phase, you should regularly test and measure performance. This will provide valuable insights, helping you identify and address potential issues before they become problems.

The checklist from Microsoft is valuable: https://learn.microsoft.com/en-us/azure/well-architected/performance-efficiency/checklist

Start optimizing your Azure workload today!

Our team of experts is ready to assist you in applying the Well-Architected Framework to your Azure environment. Let’s ensure your workload is secure, cost-optimized, and ready for the future.

Want to dive deeper into this topic?

Get in touch with our experts today. They are happy to help!
