In my previous blog post, I emphasized the importance of gathering data. However, in some cases you might not have any suitable data available. You might have raw data, but perhaps it’s unlabeled and unfit for machine learning. If you don’t have the financial resources to label this data, or you don’t have a product like reCAPTCHA to do it for free, there’s another option.

Since Amazon launched its Amazon Web Services cloud platform as a side business over a decade ago, it has grown at a tremendous pace. AWS now offers more than 165 services, giving anyone, from startups to multinational corporations, access to a dependable and scalable technical infrastructure. Some of these services are built on what we call pre-trained machine learning models.

Amazon’s pre-trained machine learning models can recognize images or objects, process text, give recommendations and more. The best part of it all is that you are able to use services based on Deep Learning without having to know anything about machine learning at all. These services are trained by Amazon, using data from its websites, its massive product catalog and its warehouses.

The information on the AWS websites might be a bit overwhelming at first. That’s why in this blog post I would like to give an overview of a few services using Amazon’s machine learning models, which I think can easily be introduced into your applications.

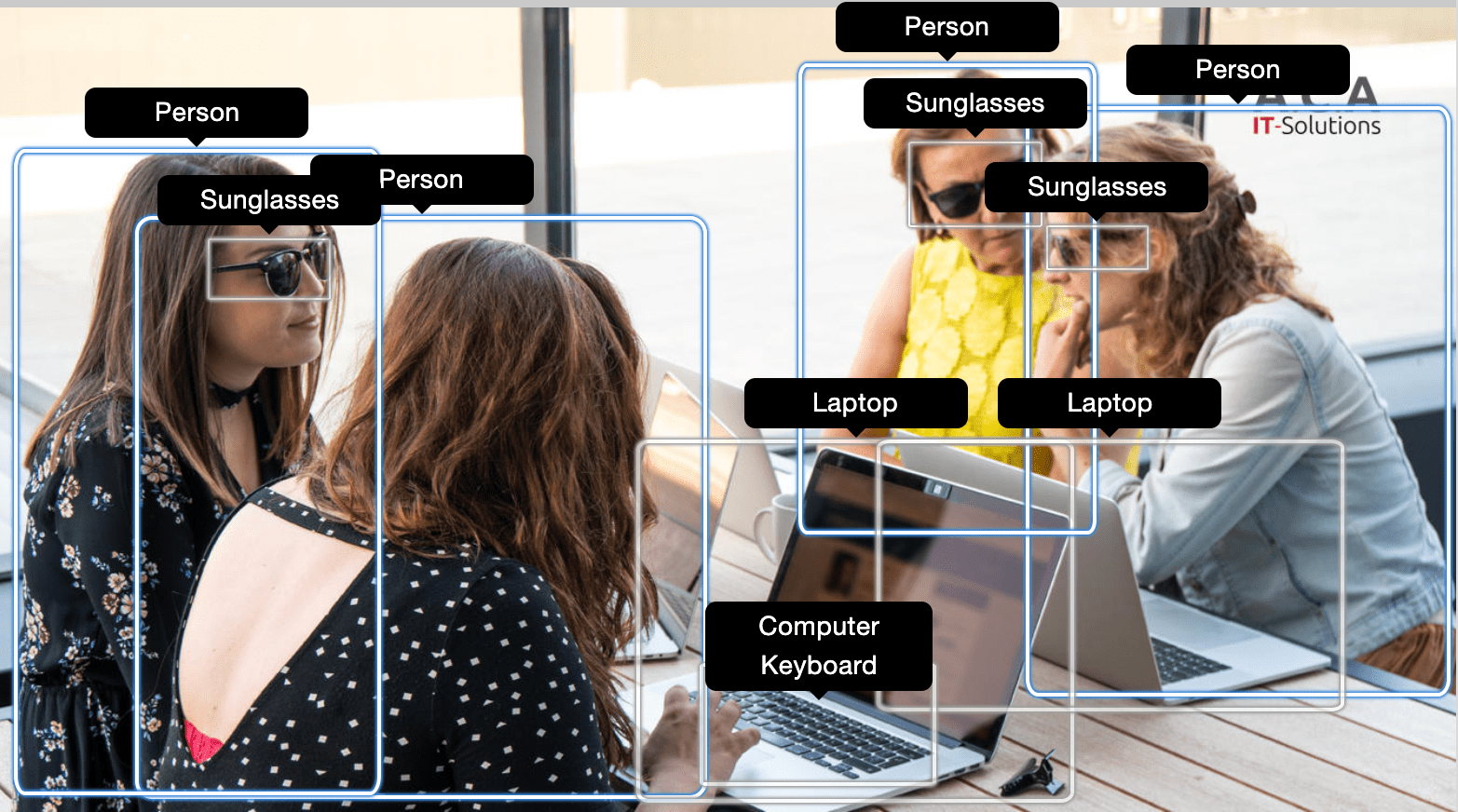

Computer vision with Rekognition

Amazon Rekognition is a service that analyzes images and videos. You can use this service to identify people’s faces, everyday objects or even different celebrities. Practical uses are adding labels to videos, for instance following the ball during a football match, or picking out celebrities in an audience.

Since Rekognition also has an API to compare faces across multiple images, you can use it to verify someone’s identity or automatically tag friends on social media. Speaking of social media: depending on the context of a platform, some user contributions might not be deemed acceptable. Through Rekognition, a social media platform can semi-automatically moderate suggestive or explicit content, giving it the opportunity to blur or reject uploaded media when certain labels are associated with it.
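To make the moderation idea concrete, here is a minimal sketch in Python. It assumes you pass in a boto3 Rekognition client, and the function name `flagged_labels` and the block list are my own illustrative choices; the label names you block should come from Rekognition's current moderation taxonomy.

```python
def flagged_labels(rekognition_client, image_bytes, blocked_labels, min_confidence=80.0):
    """Return the moderation labels on an image that match a block list.

    rekognition_client is assumed to be boto3.client("rekognition").
    blocked_labels is a hypothetical, application-defined set of label names.
    """
    response = rekognition_client.detect_moderation_labels(
        Image={"Bytes": image_bytes},
        MinConfidence=min_confidence,
    )
    blocked = set(blocked_labels)
    hits = set()
    for label in response["ModerationLabels"]:
        # A label counts when it, or its parent category, is on the block list.
        if label["Name"] in blocked or label.get("ParentName") in blocked:
            hits.add(label["Name"])
    return sorted(hits)


# Possible usage (needs AWS credentials and the boto3 package):
#   import boto3
#   with open("upload.jpg", "rb") as f:
#       if flagged_labels(boto3.client("rekognition"), f.read(), {"Suggestive"}):
#           print("blur or reject this upload")
```

Returning the matching labels rather than a plain yes/no leaves the decision (blur, reject, send to a human reviewer) to the platform.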

Digitize archives with Textract

Amazon Textract allows you to extract text from a scanned document. It uses Optical Character Recognition (OCR) and goes a step further by taking context into account.

If your company receives a lot of printed forms instead of their digital counterparts, you might have a few thousand papers you need to digitize manually. With regular OCR, it’s challenging to detect where a form label ends and a form field begins. Likewise, it is difficult for regular OCR to read newspapers, where text is laid out in two or more columns. Textract is able to identify which groups of words belong together, whether it’s a paragraph, a form field or a data table, helping you reduce the time and effort you need to digitize those archives.
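As a rough sketch of how form fields can be pulled out of a scan, the Python function below walks the blocks that Textract returns for a document analyzed with the `FORMS` feature. The function name `extract_form_fields` is hypothetical, and the client is assumed to be a boto3 Textract client.

```python
def extract_form_fields(textract_client, document_bytes):
    """Map each form label to its field value for a single-page scan.

    textract_client is assumed to be boto3.client("textract").
    """
    response = textract_client.analyze_document(
        Document={"Bytes": document_bytes},
        FeatureTypes=["FORMS"],
    )
    blocks = {block["Id"]: block for block in response["Blocks"]}

    def related_ids(block, relation_type):
        # Collect the ids this block points to through one relationship type.
        return [
            block_id
            for relation in block.get("Relationships", [])
            if relation["Type"] == relation_type
            for block_id in relation["Ids"]
        ]

    def text_of(block):
        # Join the WORD children of a key or value block into one string.
        children = (blocks[i] for i in related_ids(block, "CHILD"))
        return " ".join(c["Text"] for c in children if c["BlockType"] == "WORD")

    fields = {}
    for block in blocks.values():
        is_key = block["BlockType"] == "KEY_VALUE_SET" and "KEY" in block.get("EntityTypes", [])
        if is_key:
            values = (blocks[i] for i in related_ids(block, "VALUE"))
            fields[text_of(block)] = " ".join(text_of(v) for v in values)
    return fields
```

Checkbox-style fields (selection elements) are ignored here to keep the sketch short.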

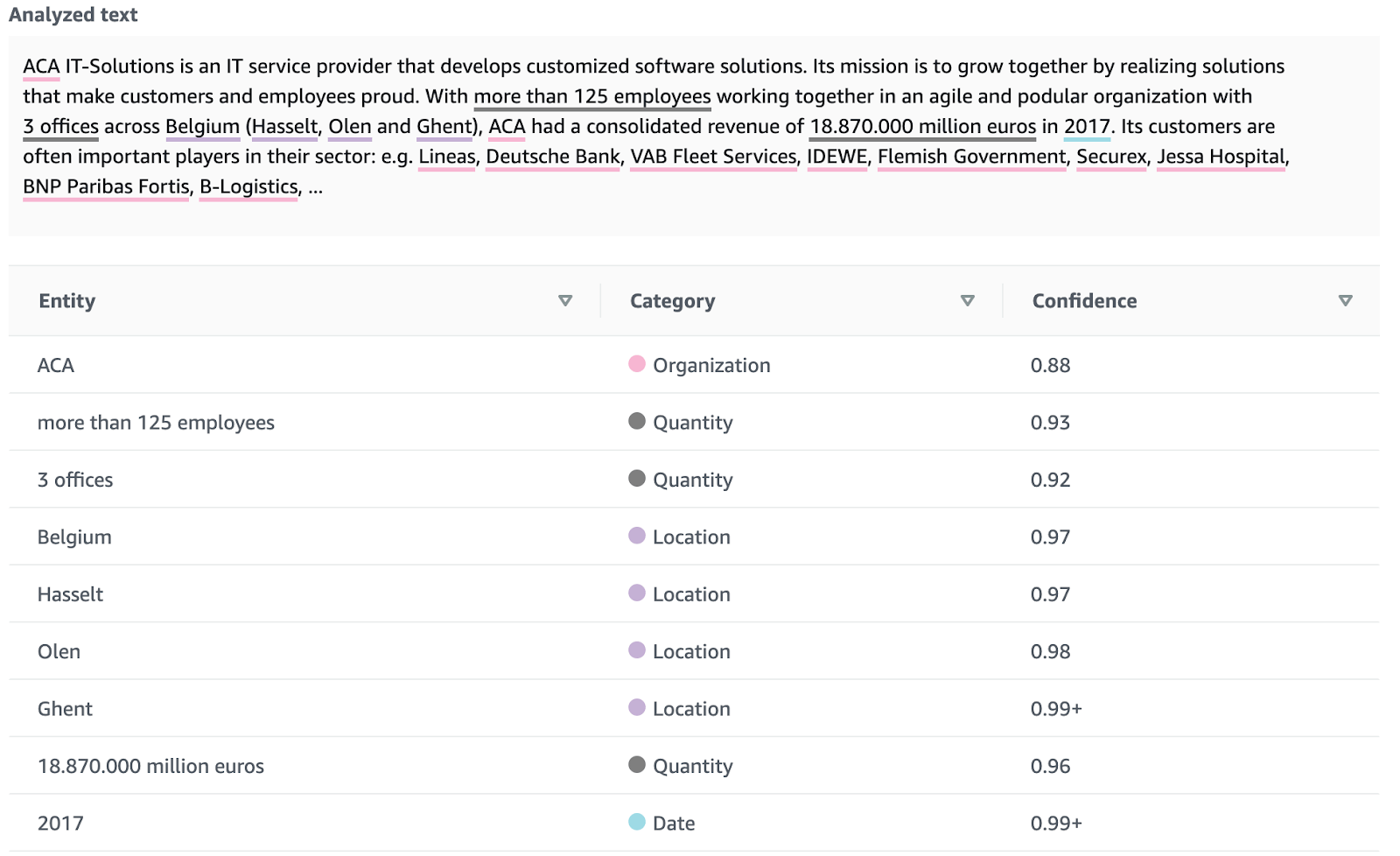

Analyze text with Comprehend

Amazon Comprehend is a Natural Language Processing (NLP) service. It helps you discover the subject of a document, key phrases, important locations, people mentioned and more.

One of its features is sentiment analysis. This can give you quick insight into interactions with customers: are they happy, angry, satisfied? Amazon Comprehend can even highlight similar frustrations around a certain topic. If reviews of a certain product are automatically found to be mostly positive, you could easily incorporate this in a promotional campaign. Similarly, if reviews are mostly negative, that might be something to forward to the manufacturer.
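A small sketch of the review scenario, assuming a boto3 Comprehend client is passed in. The function name `summarize_review_sentiment` is my own; the service returns one of `POSITIVE`, `NEGATIVE`, `NEUTRAL` or `MIXED` per text.

```python
from collections import Counter


def summarize_review_sentiment(comprehend_client, reviews, language_code="en"):
    """Count how many reviews fall into each sentiment category.

    comprehend_client is assumed to be boto3.client("comprehend").
    """
    counts = Counter()
    for text in reviews:
        response = comprehend_client.detect_sentiment(
            Text=text,
            LanguageCode=language_code,
        )
        counts[response["Sentiment"]] += 1
    return counts
```

A product whose counter is dominated by `POSITIVE` could feed a promotional campaign, while a mostly `NEGATIVE` one could be flagged for the manufacturer.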

A subservice of Comprehend, called Comprehend Medical, is used to mine patient records and extract patient data and treatment information. Its goal is to help health care providers quickly get an overview of previous interactions with a patient. By identifying key information in medical notes and adding some structure to it, Comprehend Medical helps medical customers process a large volume of documents in a short period of time.

Take notes with Transcribe

Amazon Transcribe is a general-purpose service to convert speech to text, with support for 14 languages. It automatically adds punctuation and formatting, making the text easier to read and search through.

A great application for this is creating a transcript from an audio file and sending it to Comprehend for further analysis. A call center could use real-time streaming transcription to detect the name of a customer and present their information to the operator. Alternatively, the call center could label conversations with keywords to analyze which issues arise frequently.

One of Transcribe’s features is to identify multiple speakers. This is useful for transcribing interviews or creating meeting minutes without having one of the meeting participants spend extra time jotting everything down.
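For the interview or meeting scenario, a transcription job with speaker identification could be started roughly like this. This is a sketch that assumes a boto3 Transcribe client and an audio file already uploaded to S3; the function name, job name and URI are placeholders.

```python
def start_interview_transcription(transcribe_client, job_name, media_uri,
                                  max_speakers=2, language_code="en-US"):
    """Start an asynchronous transcription job that labels each speaker.

    transcribe_client is assumed to be boto3.client("transcribe");
    media_uri is a placeholder for the S3 location of your audio file.
    """
    return transcribe_client.start_transcription_job(
        TranscriptionJobName=job_name,
        Media={"MediaFileUri": media_uri},
        LanguageCode=language_code,
        Settings={
            # Speaker identification needs an upper bound on the number of voices.
            "ShowSpeakerLabels": True,
            "MaxSpeakerLabels": max_speakers,
        },
    )
```

The job runs in the background, so you would poll `get_transcription_job` with the same job name until it completes and then fetch the transcript it points to.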

Multilingual with Translate

Amazon Translate is a machine translation service. When you’re getting reactions from customers on your products, you can translate them into your preferred language, so you can grasp the subtle implications of certain words. Or you can extend your reach by translating your social media posts. You can even combine Transcribe and Translate to automatically generate subtitles for live events in multiple languages.
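Translating incoming customer reactions might look like the sketch below. It assumes a boto3 Translate client and lets the service detect the source language by passing `auto`; the function name `translate_reviews` is hypothetical.

```python
def translate_reviews(translate_client, reviews, target_language="en"):
    """Translate each review and report which language it was detected as.

    translate_client is assumed to be boto3.client("translate").
    """
    translated = []
    for text in reviews:
        response = translate_client.translate_text(
            Text=text,
            SourceLanguageCode="auto",  # let the service detect the language
            TargetLanguageCode=target_language,
        )
        translated.append((response["SourceLanguageCode"], response["TranslatedText"]))
    return translated
```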

Express yourself with Polly

The Polly service can be considered the inverse of Transcribe. With Polly, you can convert text to speech, making the voice sound as close to natural speech as possible. With support for over 30 languages and many more lifelike voices, nothing is stopping you from making your applications talk back to you.

Polly supports a subset of the Speech Synthesis Markup Language (SSML), which gives you more control over how certain parts of the text are pronounced. Besides adding pauses, you can put emphasis on words, replace acronyms with their expanded form and even add breathing sounds. This amount of customization makes it possible to synthesize voice samples that sound very natural.
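Here is a hedged sketch of what such SSML could look like and how it might be sent to Polly. The helper names are my own, the client is assumed to be a boto3 Polly client, and the voice `Joanna` is only an example. Note that not every SSML tag works with every voice type, so check the supported tags for the voice you pick.

```python
from xml.sax.saxutils import escape, quoteattr


def build_ssml(sentence, stressed_words, acronym, expansion, pause_ms=500):
    """Build SSML with a pause, an emphasized phrase and a spelled-out acronym."""
    return (
        "<speak>"
        f"{escape(sentence)}"
        f'<break time="{int(pause_ms)}ms"/>'
        f'<emphasis level="strong">{escape(stressed_words)}</emphasis> '
        # <sub> makes the voice say the alias instead of the written text.
        f"<sub alias={quoteattr(expansion)}>{escape(acronym)}</sub>"
        "</speak>"
    )


def synthesize(polly_client, ssml, voice_id="Joanna"):
    """Return MP3 bytes for the SSML; polly_client is assumed to be boto3.client("polly")."""
    response = polly_client.synthesize_speech(
        Text=ssml,
        TextType="ssml",
        OutputFormat="mp3",
        VoiceId=voice_id,
    )
    return response["AudioStream"].read()
```

For example, `build_ssml("Good morning.", "Welcome to", "AWS", "Amazon Web Services")` produces a greeting, a half-second pause, and then "Welcome to Amazon Web Services" with stress on the first two words.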

Generating realistic speech has been a key factor in the success of apps like Duolingo, where pronunciation is of great significance. You can read about this particular use case in this blog post. Bonus: if you don’t feel like reading, you can have it read to you by Polly!

Make suggestions with Personalize

When you look for any product on Amazon’s website, you immediately get suggestions for similar products or products that other customers have bought in combination. It’s mind-blowing that out of the millions of items offered by Amazon, you get an accurate list of related products the moment the page loads. This powerful tool is available to you through Amazon Personalize. You need to provide an item inventory (products, documents, videos, …) and some demographic information about your users, and Personalize will combine this with an activity stream from your application to generate recommendations, either in real time or in bulk.

This can easily be applied to a multitude of applications. You can present a list of similar items to customers of a webshop. A course provider would be able to suggest courses similar to a topic of interest. Found a restaurant you liked? Here’s a list of similar restaurants in your area. If you can provide the data, Personalize can provide the recommendations.
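Once a Personalize campaign has been trained and deployed, fetching real-time recommendations is a single call. The sketch below assumes a boto3 `personalize-runtime` client; the function name and the campaign ARN you pass in are placeholders for your own setup.

```python
def recommend_item_ids(personalize_runtime_client, campaign_arn, user_id, limit=5):
    """Return the ids of the top recommended items for one user.

    personalize_runtime_client is assumed to be boto3.client("personalize-runtime");
    campaign_arn is a placeholder for the ARN of your deployed campaign.
    """
    response = personalize_runtime_client.get_recommendations(
        campaignArn=campaign_arn,
        userId=user_id,
        numResults=limit,
    )
    return [item["itemId"] for item in response["itemList"]]
```

The returned ids still have to be looked up in your own catalog to show names, prices or pictures.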

Create conversations with Lex

Amazon Lex is a service that provides conversational AI. It uses the same Natural Language Understanding technology as Amazon’s virtual assistant Alexa. Users can chat with your application instead of clicking through it.

Everything starts with an intent: the goal the user wants to achieve. It can be as simple as scheduling an appointment, getting directions to a location or finding a recipe that matches a list of ingredients.

Intents are triggered by utterances. An utterance is something the user says that has meaning. “I need an appointment with Dr. Smith”, “When can I see Dr. Smith?” and “Is Dr. Smith available next week Wednesday?” are all utterances for the same intent: making an appointment. Lex is powerful enough to generalize these utterances so that slight variations also trigger the correct intent.

Finally, you need to specify a few slots: pieces of data the user must provide in order to fulfill the intent. For the appointment example above, the slots would be the name of the person you want to see, the time period and perhaps the reason for your visit.

Even though the requirements are pretty simple, everything depends on the quality of the utterances and the chaining of intents. If you don’t have enough sample sentences, or the conversation keeps asking for information that the user has already provided, your user will end up frustrated and overwhelmed.
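To illustrate how intents, utterances and slots relate, here is a plain-Python model of the appointment example. This is not the Lex API: the `Intent` class and `missing_slots` function are hypothetical and only mirror the concepts, including the rule that a bot should never ask again for a slot the user has already filled.

```python
from dataclasses import dataclass


@dataclass
class Intent:
    name: str
    utterances: list      # sample sentences that should trigger the intent
    required_slots: list  # data the user must provide before fulfillment


def missing_slots(intent, filled):
    """Return the slots the bot still has to ask for, in their defined order."""
    return [slot for slot in intent.required_slots if not filled.get(slot)]


make_appointment = Intent(
    name="MakeAppointment",
    utterances=[
        "I need an appointment with Dr. Smith",
        "When can I see Dr. Smith?",
        "Is Dr. Smith available next week Wednesday?",
    ],
    required_slots=["Doctor", "Date", "Reason"],
)

# The user already named the doctor, so only the date and reason remain.
print(missing_slots(make_appointment, {"Doctor": "Dr. Smith"}))
```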

Predict demand with Forecast

A fairly new service provided by AWS is called Forecast. This service also emerged from Amazon’s own need to estimate the demand for its immense product inventory. With Forecast, you can gain insight from historical time series data. For instance, you could analyze the energy consumption of a region to project it into the near future. This gives you a probabilistic estimate of what the electricity demand will be tomorrow. Likewise, you might be able to predict that a component of your production facility needs maintenance before it wears out.
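Because the forecasts are probabilistic, a query returns several quantiles rather than a single number. The sketch below assumes a boto3 `forecastquery` client, a forecast that was generated with the `p10`, `p50` and `p90` quantiles, and a dataset keyed on `item_id`; the function name and the ARN are placeholders.

```python
def demand_range(forecastquery_client, forecast_arn, item_id):
    """Return (timestamp, low, median, high) tuples for one item.

    forecastquery_client is assumed to be boto3.client("forecastquery").
    Assumes the forecast contains the p10, p50 and p90 quantiles.
    """
    response = forecastquery_client.query_forecast(
        ForecastArn=forecast_arn,
        Filters={"item_id": item_id},
    )
    predictions = response["Forecast"]["Predictions"]
    return [
        (low["Timestamp"], low["Value"], median["Value"], high["Value"])
        for low, median, high in zip(
            predictions["p10"], predictions["p50"], predictions["p90"]
        )
    ]
```

For the energy example, the gap between the low and high values tells you how much spare capacity to plan for around the median estimate.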

Forecast can leverage Automated Machine Learning (AutoML) to find the optimal learning parameters to fit your use case. The quality of this service depends on the amount and quality of the data you can provide.

This service used to be only available to a select group until very recently, but is now available to everyone. You can sign up for Forecast here.

🚀 Takeaway

If you want to bring machine learning to your customers but are held back by a lack of machine learning expertise, Amazon offers out-of-the-box services to add intelligence to your applications. These services, trained and used by Amazon, can help your business grow and can give your customers a personal experience, without requiring any prior knowledge of machine learning.
